Gartner's AI Inference Cost Drop Headline Has a Footnote That Changes the Budget Story

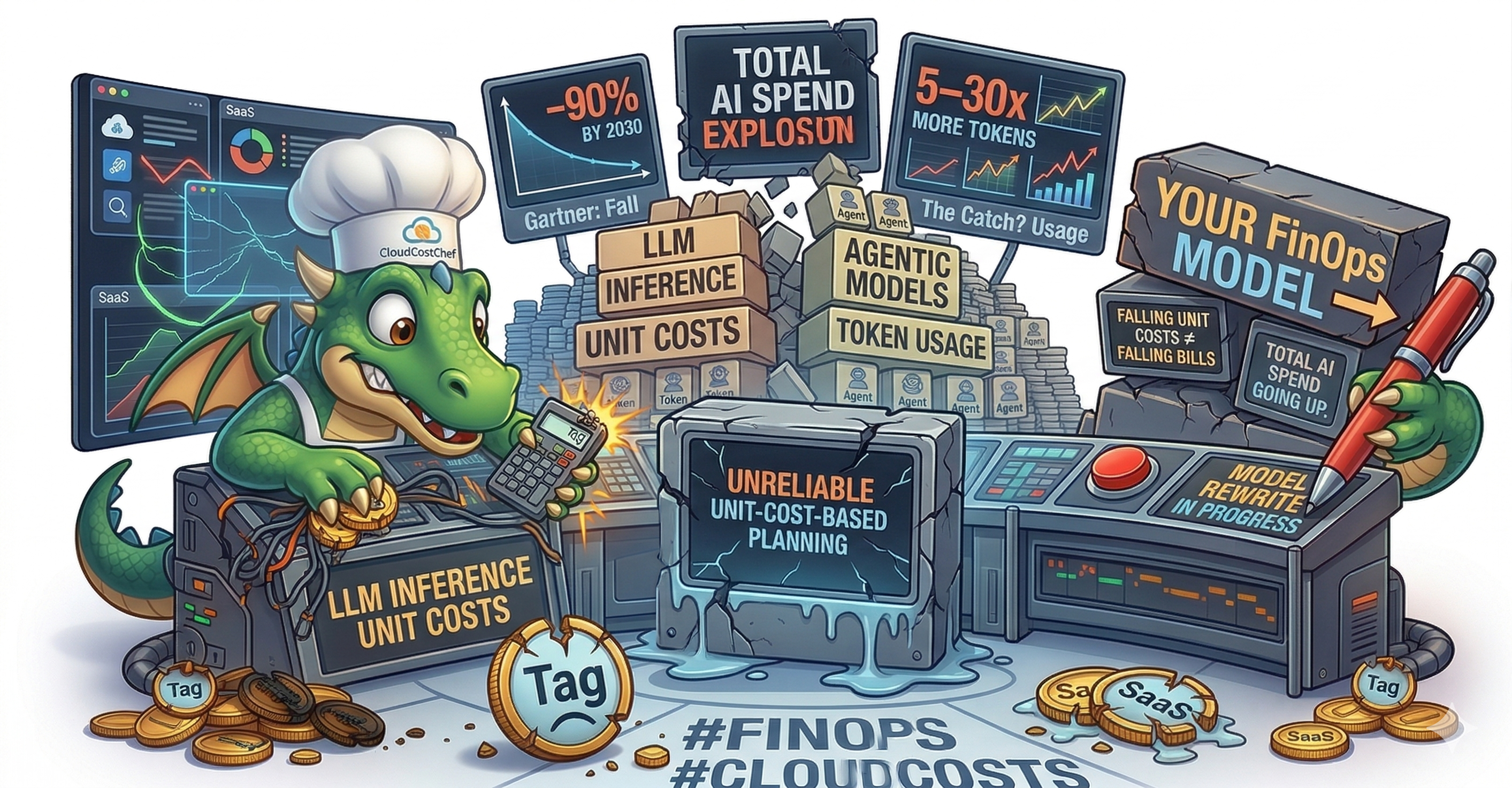

The boardroom headline says inference gets dramatically cheaper by 2030. The operational footnote says agentic token demand can scale even faster than price declines. FinOps teams that ignore that second line will miss spend targets.

The Headline vs. the Footnote

Gartner analysts forecast a steep long-run decline in unit inference cost for very large models through 2030. The same analysis warns that enterprise AI spend can still rise, because agentic systems consume significantly more tokens per task and run continuously.

The Math Behind the Paradox

A lower cost per token reduces total spend only if token demand grows more slowly than the price falls. Agentic workflows change demand itself. They execute multi-step plans, call tools repeatedly, and keep running in the background, which increases total token throughput per unit of business work.

| Scenario | Unit cost change | Token demand change | Total spend effect |
|---|---|---|---|
| Traditional chatbot usage | -90% | 1x baseline | Usually down |
| Agentic workflow (mid-range) | -90% | 10x baseline | Flat to up |
| Agentic workflow (high range) | -90% | 30x baseline | Up materially |

This is why “AI gets cheaper” can coexist with “AI budget keeps rising.” Price curves and demand curves are moving in opposite directions.
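The arithmetic behind the table can be checked with a short sketch. It assumes total spend scales as (1 + unit cost change) x demand multiple, which is a simplification; the function names are illustrative and the inputs are the table's own figures.

```python
def spend_multiplier(unit_cost_change: float, demand_multiple: float) -> float:
    """Total spend relative to baseline, where 1.0 means unchanged.

    unit_cost_change is a fraction, e.g. -0.90 for a 90% price drop.
    """
    return (1.0 + unit_cost_change) * demand_multiple


def break_even_demand(unit_cost_change: float) -> float:
    """Demand multiple at which total spend equals the baseline."""
    return 1.0 / (1.0 + unit_cost_change)


# Scenarios copied from the table above.
scenarios = {
    "Traditional chatbot usage": (-0.90, 1),
    "Agentic workflow (mid-range)": (-0.90, 10),
    "Agentic workflow (high range)": (-0.90, 30),
}

for name, (change, demand) in scenarios.items():
    print(f"{name}: {spend_multiplier(change, demand):.1f}x baseline spend")

print(f"Break-even demand at -90%: {break_even_demand(-0.90):.0f}x")
```

Under this simplification a 90% price drop is fully offset once token demand reaches 10x, and anything beyond that raises total spend.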

Why FinOps Teams Should Treat Tokens Like Utility Consumption

Seat-based budgeting fails for autonomous workloads. Agents execute when events occur, when schedules run, or when downstream systems trigger them. That is closer to utility metering than software licensing. Token burn becomes your new kWh.

| Framing | Model | Note |
|---|---|---|
| Old default | Seats x price | Useful for SaaS licensing, weak for autonomous inference |
| New reality | Tokens x workflow volume x model tier | Closer to the actual cost function |
| FinOps priority | Demand governance | Control token volume before variance appears in finance reports |
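As a rough illustration, the "tokens x workflow volume x model tier" cost function could be written as below. The tier prices, workflow names, and volumes are hypothetical placeholders, not real provider rates or measured usage.

```python
from dataclasses import dataclass

# Hypothetical USD prices per 1M tokens by model tier (assumed values).
PRICE_PER_MILLION_TOKENS = {"small": 0.50, "mid": 3.00, "frontier": 15.00}


@dataclass(frozen=True)
class Workflow:
    name: str
    runs_per_month: int   # workflow volume
    tokens_per_run: int   # average tokens consumed by one run
    model_tier: str       # key into PRICE_PER_MILLION_TOKENS


def monthly_cost(wf: Workflow) -> float:
    """Tokens x workflow volume x model-tier price, in USD per month."""
    tokens = wf.runs_per_month * wf.tokens_per_run
    return tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS[wf.model_tier]


# Illustrative workflows only.
workflows = [
    Workflow("ticket-triage", 20_000, 4_000, "small"),
    Workflow("contract-review-agent", 2_000, 250_000, "frontier"),
]

for wf in workflows:
    print(f"{wf.name}: ${monthly_cost(wf):,.2f}/month")
```

Note that nothing in this function depends on seat count: in the made-up numbers, the agent with a tenth of the runs costs far more because each run burns more tokens on a pricier tier.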

Four Moves to Make Now

1. Budget AI as utility demand, not as a static line item

Forecast token throughput by workflow class and environment. Track variance weekly, not quarterly.

2. Tier workloads by model class and business value

Route commodity tasks to small or domain-specific models. Reserve frontier reasoning for cases where the business value justifies the premium cost.

3. Improve cost visibility at operation level

AWS now supports finer-grained Bedrock billing visibility in Cost and Usage Report data. Use operation-level dimensions to avoid lumping all AI spend into one blob.

4. Build governance before autonomous volume arrives

Set model routing policy, token quotas, escalation thresholds, and owner accountability now. Doing this after scale-up is expensive and slow.
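One way to picture these governance controls is a small policy gate that runs before each model call. This is a minimal sketch under stated assumptions: the task classes, tier names, 80% escalation threshold, and the `authorize` helper are all hypothetical, not part of any provider's API.

```python
from dataclasses import dataclass

# Hypothetical routing policy: task class -> model tier.
ROUTING_POLICY = {
    "classification": "small",
    "summarization": "small",
    "multi_step_reasoning": "frontier",
}
DEFAULT_TIER = "small"        # unknown task classes fall back to the cheap tier
ESCALATION_THRESHOLD = 0.80   # assumed: notify the owner at 80% of quota


@dataclass
class WorkflowBudget:
    owner: str                # accountable team or person
    monthly_token_quota: int
    tokens_used: int = 0


def authorize(budget: WorkflowBudget, task_class: str, requested_tokens: int):
    """Return (decision, tier); decision is 'allow', 'escalate', or 'deny'."""
    tier = ROUTING_POLICY.get(task_class, DEFAULT_TIER)
    projected = budget.tokens_used + requested_tokens
    if projected > budget.monthly_token_quota:
        return "deny", tier   # over quota: usage is not recorded
    budget.tokens_used = projected
    if projected >= ESCALATION_THRESHOLD * budget.monthly_token_quota:
        return "escalate", tier   # allowed, but the owner should be notified
    return "allow", tier


budget = WorkflowBudget(owner="payments-team", monthly_token_quota=1_000_000)
print(authorize(budget, "classification", 500_000))
print(authorize(budget, "multi_step_reasoning", 350_000))
print(authorize(budget, "summarization", 200_000))
```

The point of the sketch is ordering: routing, quota, and escalation are decided before tokens are spent, and every budget carries a named owner.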

Practical CloudCostChefs Controls

Cost allocation discipline

Make AI usage attributable to teams and workflows before billing disputes appear.

Cost Allocation Best Practices

Continuous optimization loop

Move from monthly reporting to recurring detection and correction.

Continuous Optimization Best Practices

Forecasting risk to watch

Provider unit prices may drop faster than before, but not all efficiency gains are immediately passed through. FinOps planning should assume partial pass-through and focus on demand-side controls you can actually enforce.
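A forecast can make the pass-through assumption explicit instead of assuming the full efficiency gain reaches the invoice. The sketch below is illustrative only: the 50% pass-through rate, the baseline spend, and the function names are assumptions, not figures from Gartner or any provider.

```python
def effective_price_change(efficiency_gain: float, pass_through: float) -> float:
    """Customer-side unit price change as a fraction (negative means cheaper).

    efficiency_gain: provider-side unit cost reduction, e.g. 0.90 for 90%.
    pass_through: share of that gain reflected in prices, from 0.0 to 1.0.
    """
    return -efficiency_gain * pass_through


def projected_spend(baseline_spend: float, efficiency_gain: float,
                    pass_through: float, demand_multiple: float) -> float:
    """Baseline spend scaled by the effective price change and demand growth."""
    price_factor = 1.0 + effective_price_change(efficiency_gain, pass_through)
    return baseline_spend * price_factor * demand_multiple


baseline = 100_000.0  # hypothetical annual inference spend in USD

for rate in (1.0, 0.5):  # full vs. partial pass-through (assumed rates)
    spend = projected_spend(baseline, 0.90, rate, demand_multiple=10)
    print(f"pass-through {rate:.0%}: ${spend:,.0f}")
```

In this made-up case, 10x demand is budget-neutral only under full pass-through. At 50% pass-through the same demand growth multiplies spend 5.5x, which is why demand-side controls carry most of the weight.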

Bottom line

Falling inference unit cost is good news. Treating that headline as a reason to relax governance is not. The teams that model agentic token demand now will outperform when AI volume compounds.

FAQ

If inference gets cheaper, why can AI spend still rise?

Because enterprise demand is shifting toward agentic workflows that run continuously and consume far more tokens per business process than a simple chat prompt. Lower unit cost can still produce higher total cost when usage volume grows faster.

Why should FinOps teams treat tokens like utility consumption?

Tokens behave like variable throughput, not fixed seats. Cost scales with activity level, model choice, workflow loops, and autonomous execution patterns, so governance should track token demand and budget burn like electricity usage.

What is the fastest control to prevent AI cost overruns?

Model tiering with policy gates. Route commodity tasks to small models, reserve expensive frontier models for high-value reasoning, and enforce approval thresholds for premium model calls.

What changed with AWS Bedrock cost visibility?

AWS added operation-level visibility for Amazon Bedrock usage in Cost and Usage Report data, enabling more precise attribution and analysis than provider-level aggregate reporting.
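As a hedged illustration of operation-level attribution, the sketch below groups Bedrock line items by operation from a CUR-style CSV export. The column names follow the CUR lineItem columns, but the sample rows, the operation and usage-type values, and the `AmazonBedrock` product code filter are assumptions to verify against your own report.

```python
import csv
import io
from collections import defaultdict

# Made-up sample in a CUR-like CSV layout. Operation and usage-type values
# are illustrative placeholders, not real Bedrock identifiers.
SAMPLE_CUR = """lineItem/ProductCode,lineItem/Operation,lineItem/UsageType,lineItem/UnblendedCost
AmazonBedrock,ExampleOperationA,example-input-tokens,12.50
AmazonBedrock,ExampleOperationA,example-output-tokens,30.25
AmazonBedrock,ExampleOperationB,example-input-tokens,4.00
AmazonEC2,RunInstances,BoxUsage:t3.micro,9.99
"""


def bedrock_cost_by_operation(cur_csv: str) -> dict[str, float]:
    """Sum unblended cost per operation for Bedrock line items only."""
    totals: dict[str, float] = defaultdict(float)
    for row in csv.DictReader(io.StringIO(cur_csv)):
        if row["lineItem/ProductCode"] != "AmazonBedrock":  # assumed code
            continue
        totals[row["lineItem/Operation"]] += float(row["lineItem/UnblendedCost"])
    return dict(totals)


for operation, cost in sorted(bedrock_cost_by_operation(SAMPLE_CUR).items()):
    print(f"{operation}: ${cost:,.2f}")
```

The same grouping could be extended with `lineItem/UsageType` or cost allocation tags to attribute spend to teams and workflows.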

New ingredient in the kitchen. Read the label before you cook with it.

Sources & Accuracy Notes

Reviewed on March 28, 2026. Gartner's March 25, 2026 token-demand and unit-cost framing is referenced through analyst coverage and Gartner materials where direct note text is not publicly indexed. AWS Bedrock visibility references come from AWS primary documentation and announcements.

- Gartner analyst forecast (March 25, 2026 client note context). Gartner • Accessed Mar 28, 2026

Used for the unit-cost decline framing and agentic token-demand multiplier interpretation referenced in this article. The underlying Gartner analyst note is not fully publicly indexed.

- Gartner press release: GenAI cost-per-resolution pressure (Jan 26, 2026). Gartner • Accessed Mar 28, 2026

Used for Gartner analyst commentary that efficiency gains do not automatically translate into equivalent customer-side savings.

- Gartner press release: AI-optimized IaaS spending growth (Oct 15, 2025). Gartner • Accessed Mar 28, 2026

Used as supporting context that AI infrastructure and inference spending are expected to keep expanding despite falling unit economics.

- AWS Cost and Usage Report: lineItem columns. AWS Documentation • Accessed Mar 28, 2026

Used to support operation-level and usage-type cost attribution guidance in CUR-driven FinOps workflows.

- Amazon Bedrock User Guide. AWS Documentation • Accessed Mar 28, 2026

Used for Bedrock model-usage context and service-level inference workflow references.

- Cost Allocation Best Practices. CloudCostChefs • Accessed Mar 28, 2026

Referenced for internal showback and workload-level AI cost accountability controls.

- Continuous Optimization Best Practices. CloudCostChefs • Accessed Mar 28, 2026

Referenced for recurring detection and governance loops as agentic usage scales.